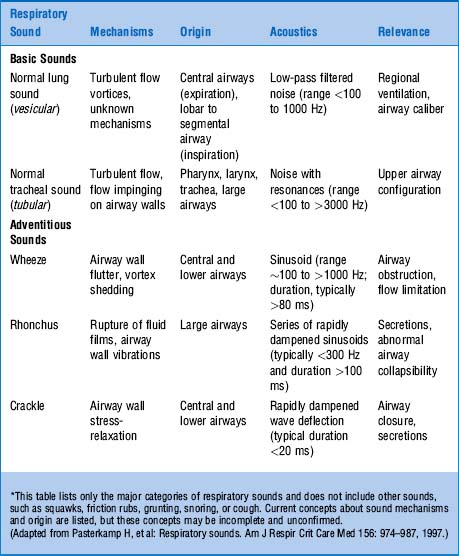

The interpretation of lung auscultation is highly subjective and relies on non-specific nomenclature. Computer-aided analysis has the potential to better standardize and automate evaluation.

We used 35.9 hours of auscultation audio from 572 pediatric outpatients to develop DeepBreath: a deep learning model identifying the audible signatures of acute respiratory illness in children. It comprises a convolutional neural network followed by a logistic regression classifier, which aggregates estimates on recordings from eight thoracic sites into a single prediction at the patient level.

Patients were either healthy controls (29%) or had one of three acute respiratory illnesses (71%): pneumonia, wheezing disorders (bronchitis/asthma), and bronchiolitis. To ensure objective estimates of model generalisability, DeepBreath was trained on patients from two countries (Switzerland, Brazil), and results are reported on an internal 5-fold cross-validation as well as on external validation (extval) in three other countries (Senegal, Cameroon, Morocco). DeepBreath differentiated healthy and pathological breathing with an Area Under the Receiver Operating Characteristic curve (AUROC) of 0.93 (standard deviation ± 0.01 on internal validation). Similarly promising results were obtained for pneumonia (AUROC 0.75 ± 0.10), wheezing disorders (AUROC 0.91 ± 0.03), and bronchiolitis (AUROC 0.94 ± 0.02). All of these results either matched or significantly improved on a clinical baseline model using age and respiratory rate. Temporal attention showed clear alignment between model prediction and independently annotated respiratory cycles, providing evidence that DeepBreath extracts physiologically meaningful representations. DeepBreath provides a framework for interpretable deep learning to identify the objective audio signatures of respiratory pathology.

Respiratory diseases are a diverse range of pathologies affecting the upper and lower airways (pharynx, trachea, bronchi, bronchioles), the lung parenchyma (alveoli) and its covering (pleura). The restriction of airflow in the variously sized passageways creates distinct patterns of sound that are detectable with stethoscopes as abnormal, “adventitious” sounds, such as wheezing, rhonchi and crackles, that indicate airflow resistance or the audible movement of pathological secretions. While there are some etiological associations with these sounds, the causal nuances are difficult for humans to interpret, due to the diversity of differential diagnoses and the non-specific, unstandardized nomenclature used to describe auscultation 1.

Indeed, despite two centuries of experience with conventional stethoscopes, during which the stethoscope has inarguably become one of the most ubiquitously used clinical tools, several studies have shown that the clinical interpretation of lung sounds is highly subjective and varies widely depending on the level of experience and specialty of the caregiver 1, 2. Deep learning has the potential to discriminate audio patterns more objectively, and recent advances in audio signal processing have shown its potential to outperform human perception. Many of these advances were ported from the field of computer vision. In particular, convolutional neural networks (CNNs) adapted for audio signals have achieved state-of-the-art performance in speech recognition 3, sound event detection 4, and audio classification 5. During standard processing, audio is often transformed into a 2D image format. The most common choice is a spectrogram, a visual representation of the audio's frequency content over time. Several studies have sought to automate the interpretation of digital lung auscultations (DLA) 6, 7, 8, with several more recent ones using deep learning models such as CNNs 9, 10.

However, most studies aim to automate the detection of the adventitious sounds that were annotated by humans 11, 12, 13, thus integrating the limitations of human perception into the prediction. Further, as these pathological sounds do not have specific diagnostic or prognostic associations, the clinical relevance of these approaches is limited 14. Instead of trying to reproduce a flawed human interpretation of adventitious sounds, a model that directly predicts a patient's diagnosis from DLA audio would likely learn more objective patterns and produce outputs that could guide clinical decision-making.
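The audio-to-image step mentioned above can be illustrated with a log-magnitude spectrogram computed by a short-time Fourier transform. The sketch below is a minimal NumPy version; the frame length, hop size, Hann window and log scaling are illustrative assumptions rather than DeepBreath's actual preprocessing, and `log_spectrogram` is a hypothetical helper name.

```python
import numpy as np


def log_spectrogram(signal, frame_len=512, hop=256, eps=1e-10):
    """Return a (n_freq_bins, n_frames) log-magnitude spectrogram.

    Hypothetical helper: the default parameters are illustrative only.
    """
    signal = np.asarray(signal, dtype=float)
    if signal.size < frame_len:
        raise ValueError("signal is shorter than one frame")
    window = np.hanning(frame_len)
    n_frames = 1 + (signal.size - frame_len) // hop
    # Slice the signal into overlapping frames, one frame per row.
    frames = np.stack([signal[i * hop : i * hop + frame_len] for i in range(n_frames)])
    # Taper each frame with the window, then take a one-sided FFT:
    # this yields frame_len // 2 + 1 frequency bins per frame.
    magnitudes = np.abs(np.fft.rfft(frames * window, axis=1))
    # Transpose so frequency is the vertical axis and time the horizontal one;
    # eps avoids log(0) for silent bins.
    return np.log(magnitudes.T + eps)


# Synthetic check: a 1-second, 200 Hz tone sampled at 4 kHz (arbitrary values).
sr = 4000
t = np.arange(sr) / sr
S = log_spectrogram(np.sin(2 * np.pi * 200 * t))
```

For this test tone the result is a 257 × 14 array (frequency bins × time frames) whose brightest row lies near 200 Hz; a CNN would then treat such an array like a single-channel image.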

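The patient-level step described above (a logistic regression combining estimates from eight thoracic sites into one prediction) can be sketched as follows. This is a minimal NumPy illustration under stated assumptions: each site's CNN is taken to emit a single score in [0, 1], the classifier uses those eight scores directly as features, and it is trained with plain batch gradient descent. The names `fit_patient_classifier` and `predict_patient` are hypothetical and the data are synthetic; DeepBreath's real features and training procedure may differ.

```python
import numpy as np

N_SITES = 8  # eight thoracic recording sites per patient


def sigmoid(z):
    # Clip logits so np.exp cannot overflow for very confident predictions.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -500.0, 500.0)))


def fit_patient_classifier(site_scores, labels, lr=0.5, n_iter=2000):
    """Fit a logistic regression mapping per-site scores to one patient label.

    site_scores: (n_patients, N_SITES) array, assumed one CNN output per site.
    labels: (n_patients,) array of 0 (healthy) or 1 (pathological).
    Uses plain batch gradient descent on the mean log-loss.
    """
    X = np.asarray(site_scores, dtype=float)
    y = np.asarray(labels, dtype=float)
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(n_iter):
        p = sigmoid(X @ w + b)
        residual = p - y  # gradient of the log-loss w.r.t. each logit
        w -= lr * (X.T @ residual) / len(y)
        b -= lr * residual.mean()
    return w, b


def predict_patient(site_scores, w, b):
    """Return the patient-level probability of pathological breathing."""
    return sigmoid(np.asarray(site_scores, dtype=float) @ w + b)


# Synthetic example: "healthy" patients get low site scores, "sick" ones high.
rng = np.random.default_rng(0)
healthy = rng.uniform(0.0, 0.4, size=(50, N_SITES))
sick = rng.uniform(0.6, 1.0, size=(50, N_SITES))
X = np.vstack([healthy, sick])
y = np.concatenate([np.zeros(50), np.ones(50)])

w, b = fit_patient_classifier(X, y)
probs = predict_patient(X, w, b)
```

In this sketch, a linear model over per-site scores keeps the aggregation easy to inspect: each learned weight shows how strongly a given recording site pushes the patient-level decision.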